Big Data in fraud detection and online marketing – Phoenix Meetup

Presenter: Raz Yalov, CTO, 41st Parameter

This was one of the best, simplest, and clearest presentations on big data I have attended.

The presenter clearly defined big data as the challenge of handling data that is larger than petabytes in size and that we cannot eyeball. Complex infrastructure is needed only when a regular server cannot handle the processing complexity.

It was interesting to learn about the difficulties that most big data analytics companies have with data privacy concerns. The company goes to the extent of giving special offers to customers who provide access to their logs, or even real-time sample data, to improve the predictive abilities of its analytics system.

Once customers agree to share their data, the company receives it through APIs and stores it in one of two places: an in-house Hadoop cluster or Amazon S3. The recommended compression format was .lzo + .lzo.index.

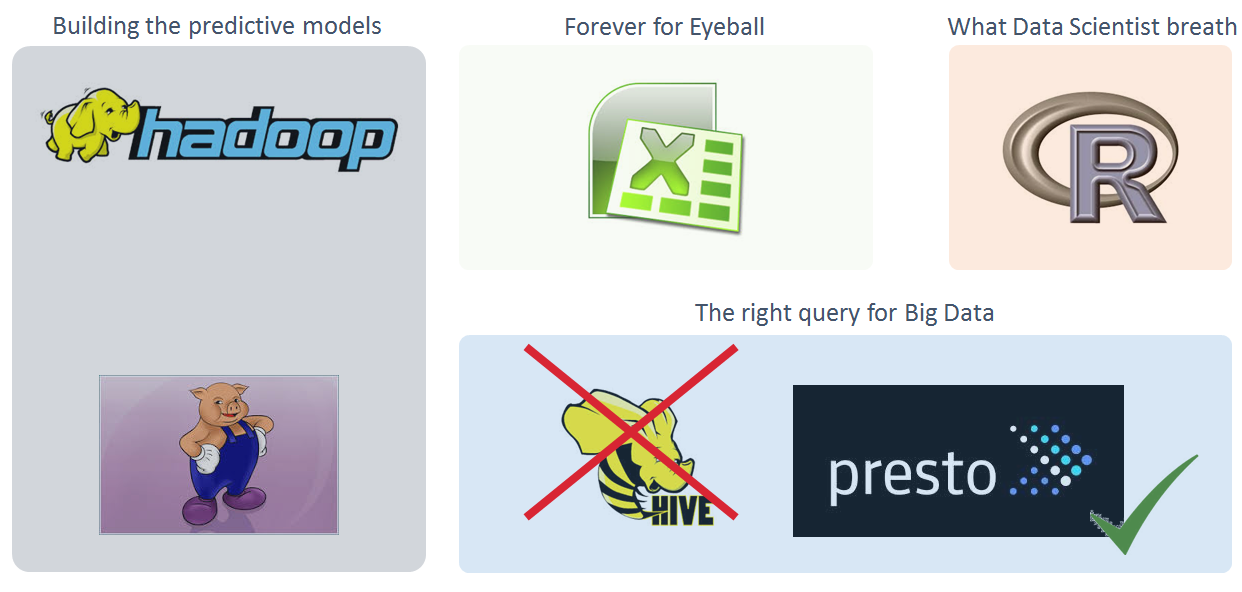

The presenter also gave his verdict on various big data technologies. The company uses Pig, Hadoop MapReduce, Presto, Excel, and R. Pig and MapReduce are used mostly to create the predictive models, and Excel has been the best resource for eyeballing data. The team consists of data scientists who love R and use it as much as possible, even for tasks it was not built for. There was a definite dislike of Hive because of its performance issues and connectivity management.

The biggest challenge with big data is getting the data from customers in the first place. Once it is collected, the next big challenge is extracting value from it. The sampling process is one of the major challenges, since the level of garbage data is high.
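To make the sampling problem concrete, here is a minimal sketch of one common approach: drop unusable records first, then draw a uniform sample from what is left using reservoir sampling. This is not the method described in the talk. The JSON-lines input format and the field names `device_id` and `timestamp` are assumptions made purely for illustration.

```python
import json
import random
from typing import Iterable, Iterator, List, Optional

# Hypothetical field names; a real log schema would differ.
REQUIRED_FIELDS = ("device_id", "timestamp")


def parse_valid(lines: Iterable[str]) -> Iterator[dict]:
    """Yield only records that parse as JSON objects with all required fields set."""
    for line in lines:
        try:
            record = json.loads(line)
        except json.JSONDecodeError:
            continue  # unparseable line: treat as garbage
        if not isinstance(record, dict):
            continue  # valid JSON, but not a record
        if all(record.get(field) not in (None, "") for field in REQUIRED_FIELDS):
            yield record


def reservoir_sample(records: Iterable, k: int, seed: Optional[int] = None) -> List:
    """Return up to k items chosen uniformly at random from a stream of unknown length."""
    rng = random.Random(seed)
    sample: List = []
    for i, record in enumerate(records):
        if i < k:
            sample.append(record)  # fill the reservoir first
        else:
            j = rng.randint(0, i)  # inclusive on both ends
            if j < k:
                sample[j] = record  # replace with probability k / (i + 1)
    return sample
```

Filtering before sampling keeps garbage from taking up slots in the sample, and the reservoir needs only one pass over the stream, which matters when the input does not fit in memory. A call such as `reservoir_sample(parse_valid(open_log_lines), 1000)` would combine the two steps, where `open_log_lines` stands for any iterable of text lines.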

Looking forward to seeing how visualization can be introduced into such a complex and challenging environment.

Yes, Raz is very unique and presents the topics and issues in simple words understandable by everyone.